LG AI Research has unveiled EXAONE 3.0, the first South Korean AI model released as open source. The model currently supports two languages, English and Korean, and is built on a decoder-only Transformer architecture. EXAONE 3.0 has 7.8 billion parameters and was trained on 8 trillion tokens.

LG reports that the release includes a specialized 7.8-billion-parameter version tuned to follow user instructions, making it particularly useful for scientific research. The company hopes the launch will drive deeper AI research both within South Korea and internationally and foster the growth of the AI ecosystem.

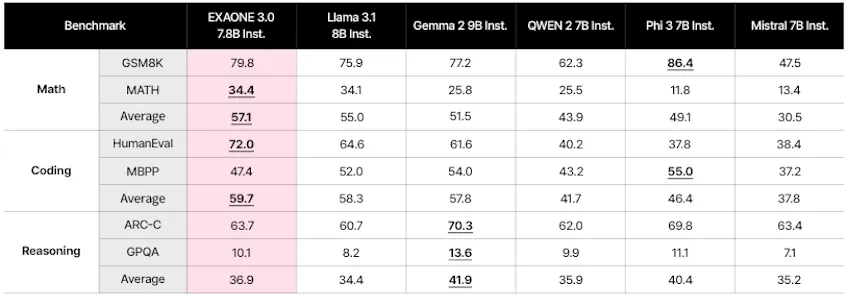

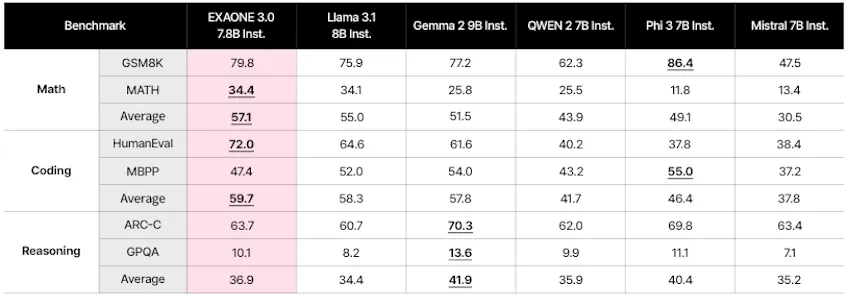

In benchmark testing, EXAONE 3.0 outperformed other language models such as Llama 3.0 and did especially well on mathematics and programming tasks. Compared with previous models, EXAONE 3.0 cuts inference (output) time by 56%, memory consumption by 35%, and operational costs by 72%.
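To put the reported reductions in perspective, a percentage reduction can be converted into the fraction of the baseline that remains and the corresponding improvement factor. The short sketch below does only that arithmetic; the helper names `remaining_fraction` and `improvement_factor` are illustrative, and the inputs are simply the figures quoted above, not independent measurements.

```python
def remaining_fraction(reduction_pct: float) -> float:
    """Fraction of the baseline left after a percentage reduction."""
    if not 0 <= reduction_pct < 100:
        raise ValueError("reduction must be in the range [0, 100)")
    return 1 - reduction_pct / 100


def improvement_factor(reduction_pct: float) -> float:
    """How many times smaller the new value is than the baseline."""
    return 1 / remaining_fraction(reduction_pct)


# Reductions as reported for EXAONE 3.0 versus previous models.
reported_reductions = {
    "output (inference) time": 56,
    "memory consumption": 35,
    "operational cost": 72,
}

for metric, pct in reported_reductions.items():
    print(
        f"{metric}: {remaining_fraction(pct):.2f} of the baseline, "
        f"about {improvement_factor(pct):.2f}x lower"
    )
```

Read this way, the reported figures correspond to output that is roughly 2.3 times faster, memory use about 1.5 times lower, and operating costs about 3.6 times lower than the baseline.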

The latest version of EXAONE 3.0 was trained on 60 million examples, including data related to patents, code, mathematical problems, and chemical formulas. LG plans to expand the training data to 100 million examples across various fields by the end of the year.

To reduce energy consumption, LG AI Research focused on optimization techniques, shrinking the model by 97% relative to EXAONE 1.0 while significantly improving on its performance.