Amazon has rolled out an updated version of its proprietary image-generating model, Titan Image Generator, for AWS customers using the Bedrock generative AI platform.

Dubbed Titan Image Generator v2, the new iteration introduces a range of enhanced features, as detailed by AWS principal developer advocate Channy Yun in a blog post. According to Yun, users can now “guide” their generated images with reference visuals, modify existing images, remove backgrounds, and generate variations of an image.

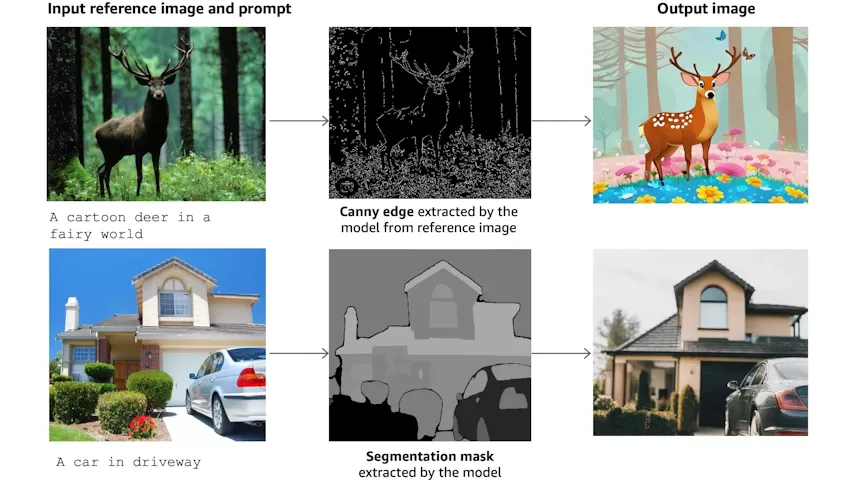

“Titan Image Generator v2 can intelligently detect and segment multiple foreground objects,” Yun explains. “With the Titan Image Generator v2, you can generate color-conditioned images based on a color palette. [And] you can use the image conditioning feature to shape your creations.”

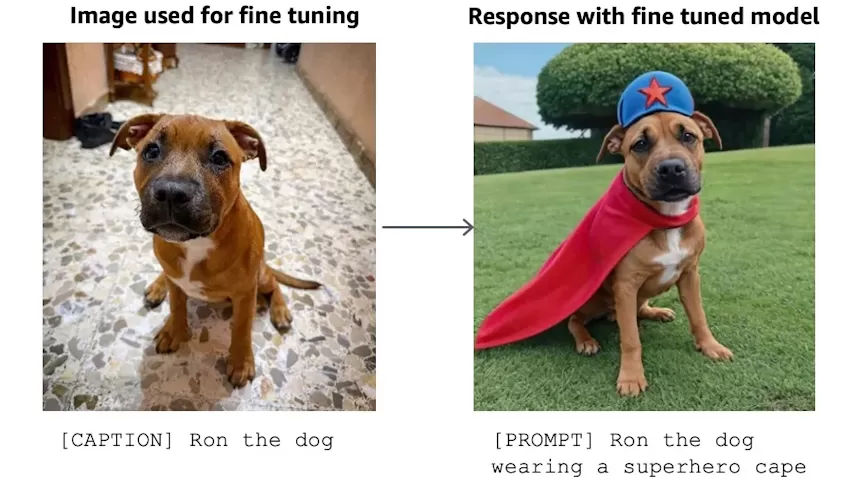

The model supports image conditioning, which allows it to take in a reference image and focus on specific visual elements such as edges, object outlines, and structural components. It can also be fine-tuned using reference images, like a product or company logo, to ensure that the generated images maintain a consistent look and feel.
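For readers who want a sense of what image conditioning and color conditioning might look like in practice, here is a minimal Python sketch that only builds the JSON request bodies. The field names (`taskType`, `textToImageParams`, `conditionImage`, `controlMode`, `colorGuidedGenerationParams`) and the model ID are assumptions based on AWS's public Bedrock documentation for Titan image models, not details from Yun's post, and the helper function names are hypothetical. Check the current AWS reference before relying on any of them.

```python
import base64

# Assumed Bedrock model identifier for Titan Image Generator v2.
MODEL_ID = "amazon.titan-image-generator-v2:0"

# Assumed control modes: edge-based or segmentation-based conditioning.
CONTROL_MODES = ("CANNY_EDGE", "SEGMENTATION")


def build_conditioned_request(prompt, reference_image, control_mode="CANNY_EDGE",
                              control_strength=0.7):
    """Build a text-to-image body guided by a reference image (hypothetical helper)."""
    if control_mode not in CONTROL_MODES:
        raise ValueError(f"control_mode must be one of {CONTROL_MODES}")
    return {
        "taskType": "TEXT_IMAGE",
        "textToImageParams": {
            "text": prompt,
            # The reference image is sent as a base64-encoded string.
            "conditionImage": base64.b64encode(reference_image).decode("ascii"),
            "controlMode": control_mode,
            "controlStrength": control_strength,
        },
        "imageGenerationConfig": {"numberOfImages": 1, "height": 1024, "width": 1024},
    }


def build_color_guided_request(prompt, hex_colors):
    """Build a body that steers generation toward a color palette (hypothetical helper)."""
    if not hex_colors:
        raise ValueError("at least one hex color is required")
    return {
        "taskType": "COLOR_GUIDED_GENERATION",
        "colorGuidedGenerationParams": {"text": prompt, "colors": list(hex_colors)},
        "imageGenerationConfig": {"numberOfImages": 1, "height": 1024, "width": 1024},
    }
```

Sending a body like this would typically go through the `invoke_model` call on boto3's `bedrock-runtime` client, with the body serialized via `json.dumps`. That step needs AWS credentials and model access, so it is left out of the sketch.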

AWS has remained vague about the exact data used to train its Titan Image Generator models. The company has previously indicated to TechCrunch that it employs a mix of proprietary and licensed data.

Few vendors disclose such information readily, viewing their training data as a competitive edge and thus keeping it confidential. Details about training data can also be a potential source of intellectual property-related lawsuits, which further discourages transparency.

Instead of full disclosure, AWS offers an indemnification policy that protects customers if a Titan model, like Titan Image Generator v2, produces a near-identical copy of a potentially copyrighted training example.

In a recent second-quarter earnings call, Amazon CEO Andy Jassy expressed strong confidence in generative AI technologies like AWS’ Titan models, despite some hesitation from enterprises and the increasing costs associated with training, fine-tuning, and deploying these models.

“In the generative AI space, it’s going to get big fast,” he stated, “and it’s largely all going to be built from the get-go in the cloud.”